Data Modernization – What is the best route for your transformation journey? (Part 2)

So, you have decided to undertake a data modernization exercise, as befits any forward-thinking organization. That’s the good news!

The question now is: what is the way forward? What is the most appropriate model for your organization?

The truth is that there is no one-size-fits-all solution. Over the last decade, Data Lakes grew to be the de facto model for modernization. These days, they are being supplanted by, or in many cases have been subsumed into, Data Meshes. Both models have their votaries, and both come with their own set of challenges.

Let us examine these two models in a little more detail so that you can wrap your mind around them more easily and be better positioned to choose between them.

The Data Lake

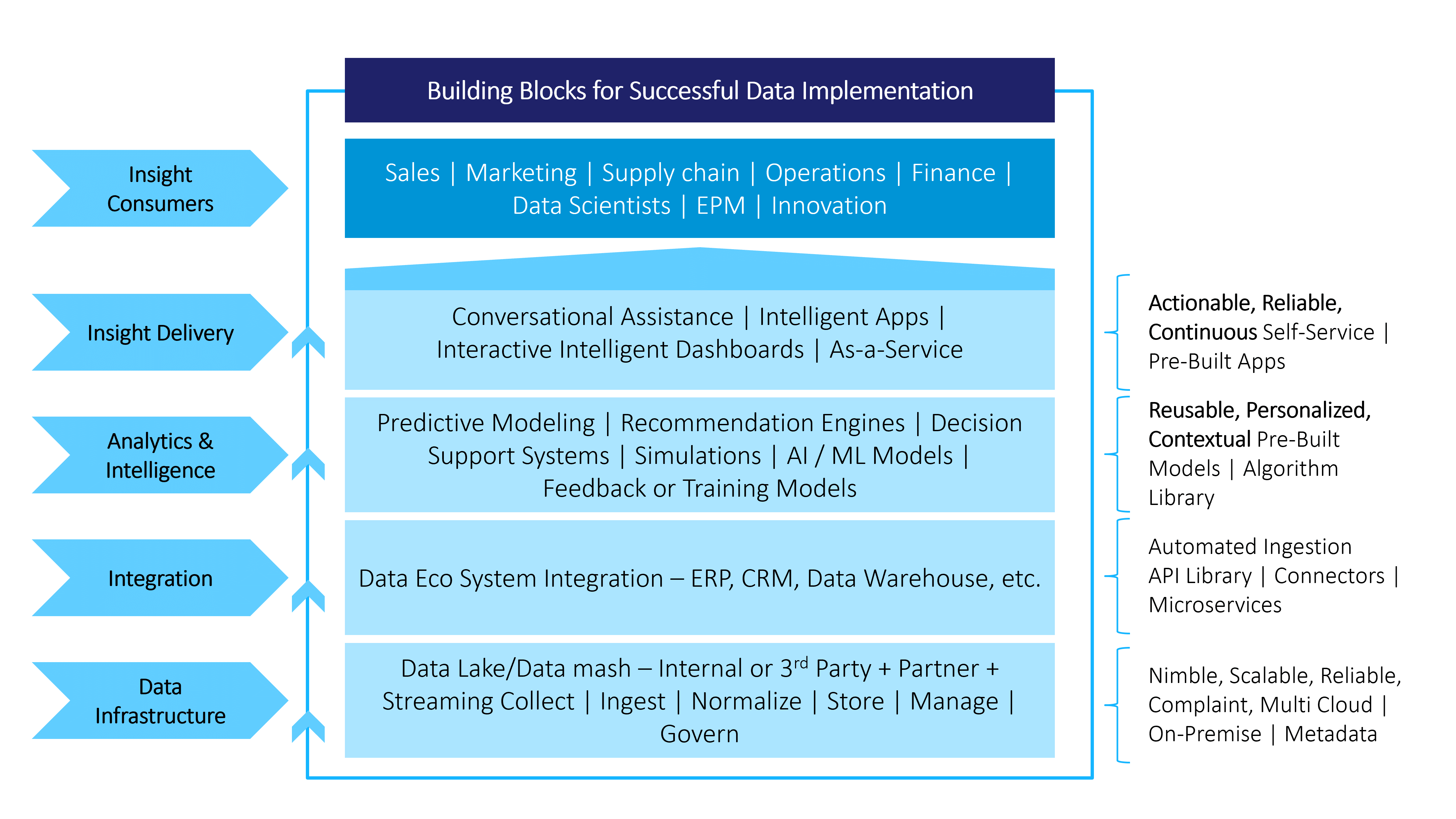

A Data Lake is a large reservoir into which raw data can be poured and stored until needed. Thanks to its flat architecture, it stores data in its native format, as binary large objects (blobs) or files. It takes in unstructured data, such as emails and documents; binary data, such as images, audio, and video; semi-structured data, such as CSV, logs, and XML; and structured data from relational databases. Any extract-transform-load (ETL) processing happens within the Data Lake itself, after the raw data has landed.
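To make "native format" concrete, here is a minimal sketch in Python. It assumes a local temporary directory stands in for the lake's storage, and the helper names `land_raw` and `read_on_demand`, as well as the `raw/<source>/` folder layout, are illustrative rather than part of any particular platform.

```python
import csv
import io
import json
import tempfile
from pathlib import Path


def land_raw(lake_root: Path, source: str, filename: str, payload: bytes) -> Path:
    """Store the payload byte-for-byte, with no parsing or schema applied."""
    target = lake_root / "raw" / source / filename
    target.parent.mkdir(parents=True, exist_ok=True)
    target.write_bytes(payload)
    return target


def read_on_demand(path: Path):
    """Interpret a file only when a consumer asks for it."""
    if path.suffix == ".csv":
        text = path.read_text(encoding="utf-8")
        return list(csv.DictReader(io.StringIO(text)))
    if path.suffix == ".json":
        return json.loads(path.read_text(encoding="utf-8"))
    return path.read_bytes()  # images, audio, and so on stay opaque blobs


# Hypothetical stand-in for cloud or distributed storage.
lake = Path(tempfile.mkdtemp())

orders = land_raw(lake, "sales", "orders.csv", b"order_id,amount\n1,250\n2,90\n")
click = land_raw(lake, "web", "click.json", b'{"user": "u1", "page": "/home"}')
logo = land_raw(lake, "marketing", "logo.png", b"\x89PNG\r\n")

order_rows = read_on_demand(orders)   # parsed only now, at read time
click_event = read_on_demand(click)
logo_blob = read_on_demand(logo)
```

The point of the sketch is that nothing is transformed on the way in; structure is applied only when someone reads the data, which is also why a lake can lose track of its contents if nobody records what was landed.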

The Data Lake can, therefore, efficiently manage the high Volume, high Variety, and high Velocity of Big Data. It also significantly enhances the value of Big Data by making it available as reports, dashboards, and applications, to facilitate better visualization, advanced analytics, and machine learning. All, of course, to ultimately empower organizations with the ability to take evidence-supported business decisions with more far-reaching impact than ever before.

Being a single, integrated, and complete system, the Data Lake also facilitates faster and simpler development of applications, since they can be built on a single codebase.

The Data Lake can reside in the cloud, on a platform such as Microsoft Azure, or on premises, on a distributed file system such as the Hadoop Distributed File System (HDFS), which can be paired with a database engine such as MS SQL Server.

However, the Data Lake also has its drawbacks.

As the volume of data increases and grows more complex, the central IT function becomes overloaded with requests and cannot keep pace. Individual project teams then try to bypass it and deploy quick fixes that are poorly integrated and create problems in the future.

What is worse, organizations keep pouring data into the Lake and eventually lose track of what it contains. Much valuable information can go unnoticed because data analysts, lacking knowledge of the domain the data came from, end up on fishing expeditions.

Many organizations have seen their Data Lakes turn into data swamps because, beyond a certain point, making productive use of them entails considerable technical and organizational effort.

The Data Mesh

The Data Mesh evolved in response to the many challenges that Data Lakes posed.

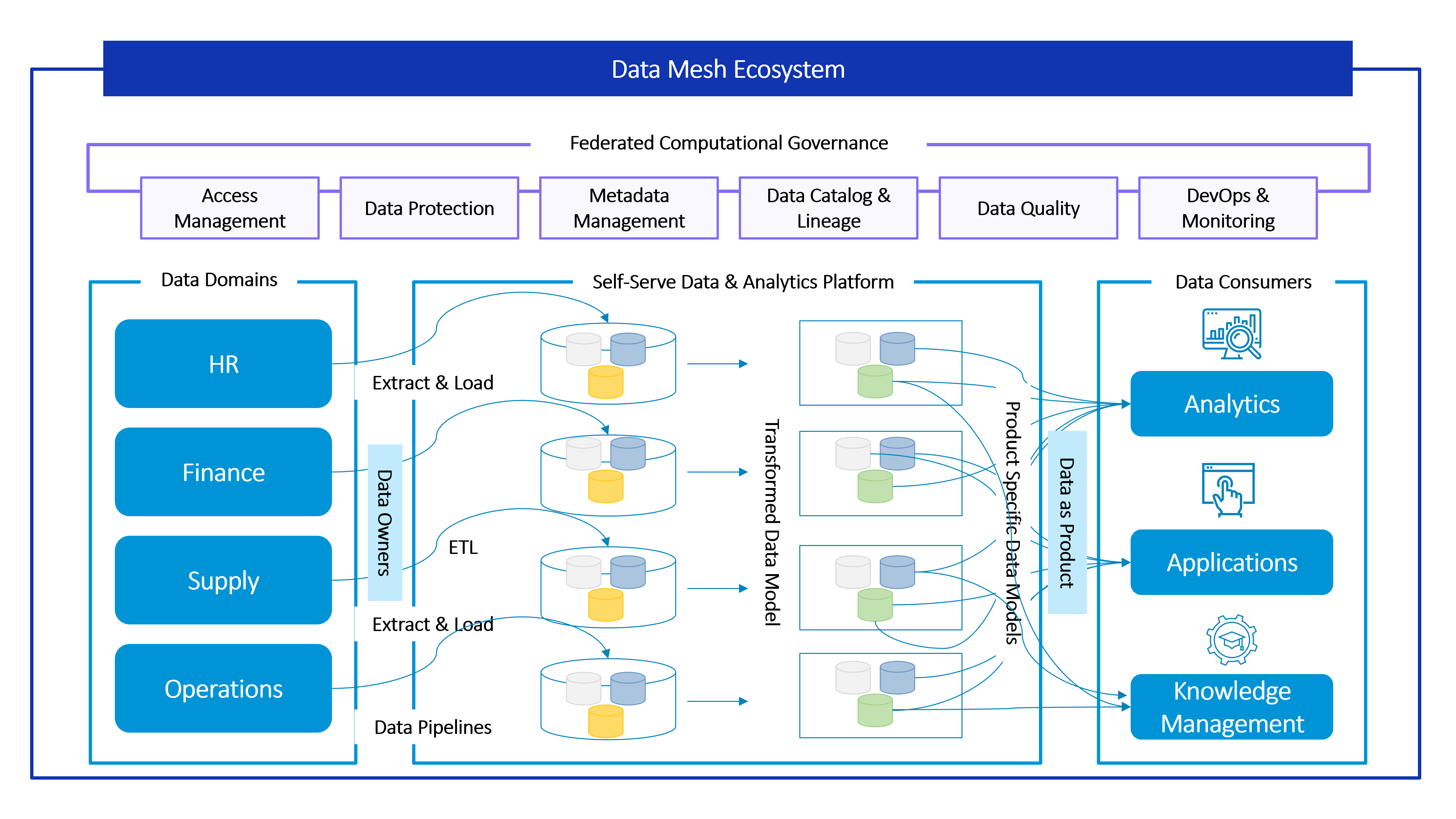

Unlike the Data Lake, the Data Mesh is a composite ecosystem, not a monolith. It breaks giant, monolithic enterprise data architectures into decentralized subsystems, each owned and managed by a dedicated team.

The Data Mesh facilitates the management, connection, and smooth flow of data from producers through to consumers, whether outside or within a Data Lake. In that sense, a Data Mesh may include Data Lakes.

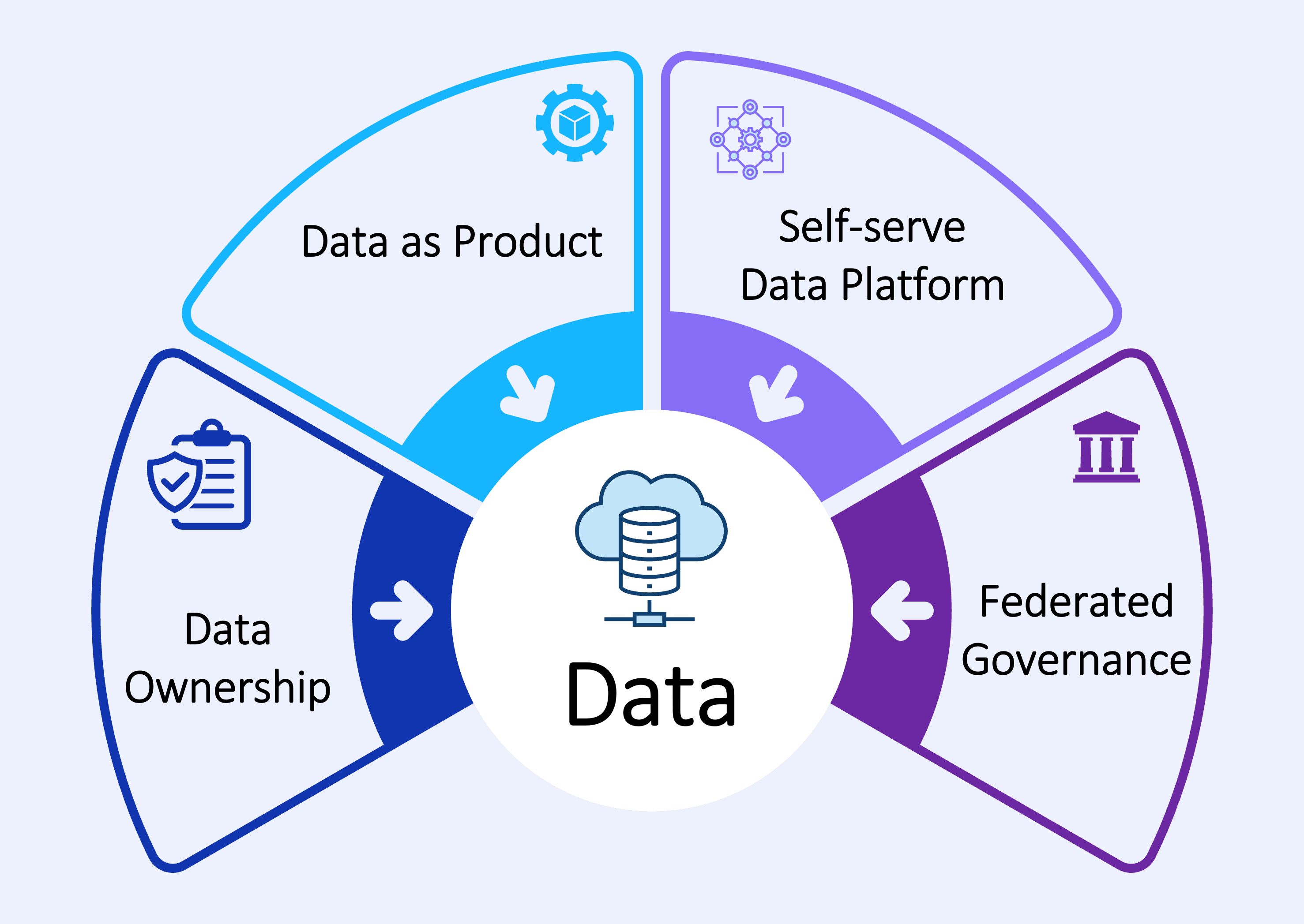

Data Meshes can be said to have four pillars:

Decentralized Data Ownership

Data is owned by the domain that produces it, typically a business function such as HR, Finance, or Marketing. Because these teams understand their own data best, more value can be derived from it. Tools such as Azure Databricks are commonly used to process the large data workloads.

Data as Product

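Users, such as data analysts, can easily source data directly from the domain owners, who are responsible for ensuring that the data is of high quality. Approaches such as event sourcing and CQRS (Command Query Responsibility Segregation) help reduce conflicts between those who write the data and those who read it.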
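For readers unfamiliar with event sourcing and CQRS, the following is a minimal sketch in Python rather than a production design. The event names, the `EventStore` class, and the HR example are all hypothetical. Commands only append immutable events to a log, while queries rebuild a separate read model by replaying that log.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass(frozen=True)
class Event:
    """An immutable fact recorded by the owning domain."""
    kind: str          # hypothetical names: "EmployeeHired", "EmployeeTransferred"
    employee_id: str
    department: str


@dataclass
class EventStore:
    """Append-only log: events are added, never updated or deleted."""
    events: List[Event] = field(default_factory=list)

    def append(self, event: Event) -> None:
        self.events.append(event)


# Command side: writes only ever append events.
def hire(store: EventStore, employee_id: str, department: str) -> None:
    store.append(Event("EmployeeHired", employee_id, department))


def transfer(store: EventStore, employee_id: str, new_department: str) -> None:
    store.append(Event("EmployeeTransferred", employee_id, new_department))


# Query side: a read model derived by replaying the log in order.
def headcount_by_department(store: EventStore) -> Dict[str, int]:
    current: Dict[str, str] = {}
    for event in store.events:
        current[event.employee_id] = event.department  # latest event wins
    return dict(Counter(current.values()))


store = EventStore()
hire(store, "e1", "HR")
hire(store, "e2", "Finance")
transfer(store, "e1", "Finance")
headcount = headcount_by_department(store)
```

Because writers never overwrite each other's records and readers work from a derived view, the two sides can evolve independently; the full history also remains available for audit.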

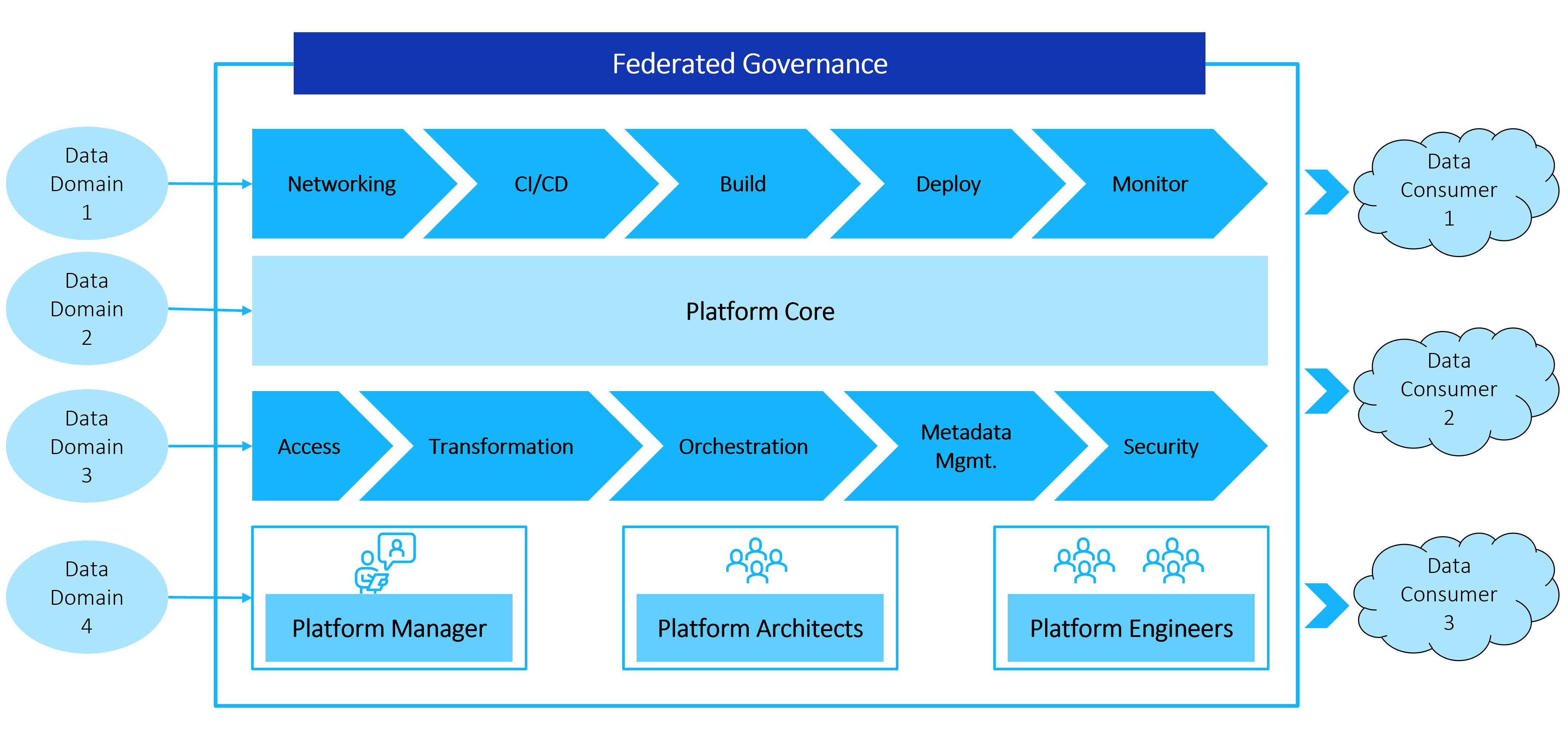

Self-serve data infrastructure as a platform

Domain teams can create, transform, and consume data products autonomously.

Federated governance

Universal standards are mandated across all domains to enable smooth interoperability and flow of data.
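One way to picture how the last three pillars fit together is a data product that publishes a small descriptor, which a shared governance check then validates. The sketch below is in Python and is purely illustrative: the `DataProduct` fields, the approved-format list, and the rules themselves are assumptions, not an established standard.

```python
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical organization-wide standard agreed under federated governance.
ALLOWED_FORMATS = {"parquet", "csv", "json"}


@dataclass
class DataProduct:
    """Descriptor a domain team publishes alongside its data."""
    name: str
    owner_domain: str
    data_format: str
    schema: Dict[str, str]      # column name -> type
    freshness_sla_hours: int    # how stale the data is allowed to become


def governance_violations(product: DataProduct) -> List[str]:
    """Return the list of rules the product breaks (empty means compliant)."""
    problems: List[str] = []
    if not product.owner_domain:
        problems.append("missing owner domain")
    if product.data_format not in ALLOWED_FORMATS:
        problems.append(f"format '{product.data_format}' is not approved")
    if not product.schema:
        problems.append("schema is not published")
    if product.freshness_sla_hours <= 0:
        problems.append("freshness SLA must be a positive number of hours")
    return problems


compliant = DataProduct(
    name="employee_headcount",
    owner_domain="HR",
    data_format="parquet",
    schema={"department": "string", "headcount": "int"},
    freshness_sla_hours=24,
)
non_compliant = DataProduct(
    name="campaign_spend",
    owner_domain="Marketing",
    data_format="xlsx",
    schema={},
    freshness_sla_hours=24,
)

compliant_issues = governance_violations(compliant)
non_compliant_issues = governance_violations(non_compliant)
```

The domains remain free to build their products however they like; only the descriptor and the handful of shared rules are common to everyone.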

The Data Mesh brings many benefits to the table

Flexibility and choice: Since its architecture is domain-driven and distributed, you have the flexibility to choose the vendors and technologies that work best for you, without getting locked into one platform.

Greater agility, seamless collaboration, shorter project times: Since domain teams own their data, they can operate independently, making them more agile and responsive. At the same time, since the teams are cross-functional, collaboration becomes simpler and more efficient. Development accelerates and projects go live faster!

Superior quality: Since ownership is vested with domain experts, the quality of the data tends to be higher. Further, by mandating universal protocols and principles, the Data Mesh promotes the delivery of data in standardized formats for easier access.

Quick service: Data producers and data users interact based on pre-determined SLAs, which enables much faster delivery of data. Data management needs such as storage, logging, and identity management, which would otherwise slow the process down, are handled by the Data Mesh’s built-in capabilities.

Scalability: Being distributed in structure, the Data Mesh is also eminently scalable with minimal disruption.

So, should your company upgrade to a Data Mesh?

A Data Mesh certainly sounds like a panacea for all data ills but, like all technology solutions, it should be adopted only after due thought and diligence. Keeping the following factors in mind will help you make a better-informed decision about whether your organization needs to upgrade to a Data Mesh.

Duplication of data: Repurposing data to serve another domain’s needs may lead to data duplication. This can lead to higher storage requirements as well as increased data management costs.

Governance non-compliance: The availability of multiple data products and pipelines may lead to non-compliance with governance standards. Therefore, these standards will need to be clearly articulated, and compliance enforced through appropriate measures at the domain level.

Change management efforts: Deploying data mesh architecture and decentralized data operations will entail organization-wide change management efforts. You will need to plan to allow for business disruptions and to ensure that critical operations continue.

Choosing future-proof technologies: Teams will have to think long term when selecting technologies that will be standardized across the company, to ensure easier future upgrades with minimal disruption.

Cross-domain analytics: Reporting becomes decentralized as well, and a separate organization-wide model may need to be defined to consolidate diverse data products into one report.
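As a rough illustration of the cross-domain point, the Python sketch below consolidates two hypothetical data products, one from HR and one from Finance, into a single report. The field names and figures are invented for the example, and the shared `department` key is the kind of organization-wide convention that would need to be agreed upfront.

```python
from typing import Dict, List, Optional

# Hypothetical data products published by two different domains.
hr_headcount = [
    {"department": "Sales", "headcount": 12},
    {"department": "Support", "headcount": 8},
]
finance_costs = [
    {"department": "Sales", "payroll_cost": 960000},
    {"department": "Support", "payroll_cost": 480000},
]


def consolidate(headcount: List[Dict], costs: List[Dict]) -> List[Dict]:
    """Join the two products on the shared 'department' key."""
    cost_by_department = {row["department"]: row["payroll_cost"] for row in costs}
    report = []
    for row in headcount:
        department = row["department"]
        cost: Optional[int] = cost_by_department.get(department)
        heads = row["headcount"]
        report.append({
            "department": department,
            "headcount": heads,
            "payroll_cost": cost,
            # Left as None when Finance has no matching row or headcount is zero.
            "cost_per_head": cost / heads if cost is not None and heads else None,
        })
    return report


report = consolidate(hr_headcount, finance_costs)
```

The join only works because both domains identify departments the same way, which is why the consolidated model has to be defined at the organization level rather than by any one domain.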

Talk to us at ITC Infotech. We’ll undertake an assessment of your existing digital landscape, identify modernization areas, build a strategic roadmap, and define the enterprise architecture you need.

Build a Scalable, Flexible & Secure Data Ecosystem | Enable Insights-powered Decisions | Be Future Ready to leverage AI/ML | Gain Business Intelligence Faster and Cheaper | Enable Rapid Value-led Experimentation | Drive Data-as-a-Service.

Part 1 of this blog: Modernize the Data Ecosystem to Lay the Foundation of an Insights-driven Digital Next Enterprise (Part 1)

Reference:

Zhamak Dehghani

Data Mesh Founder

Author:

Bhagaban Khatai

Data Transformation Leader
